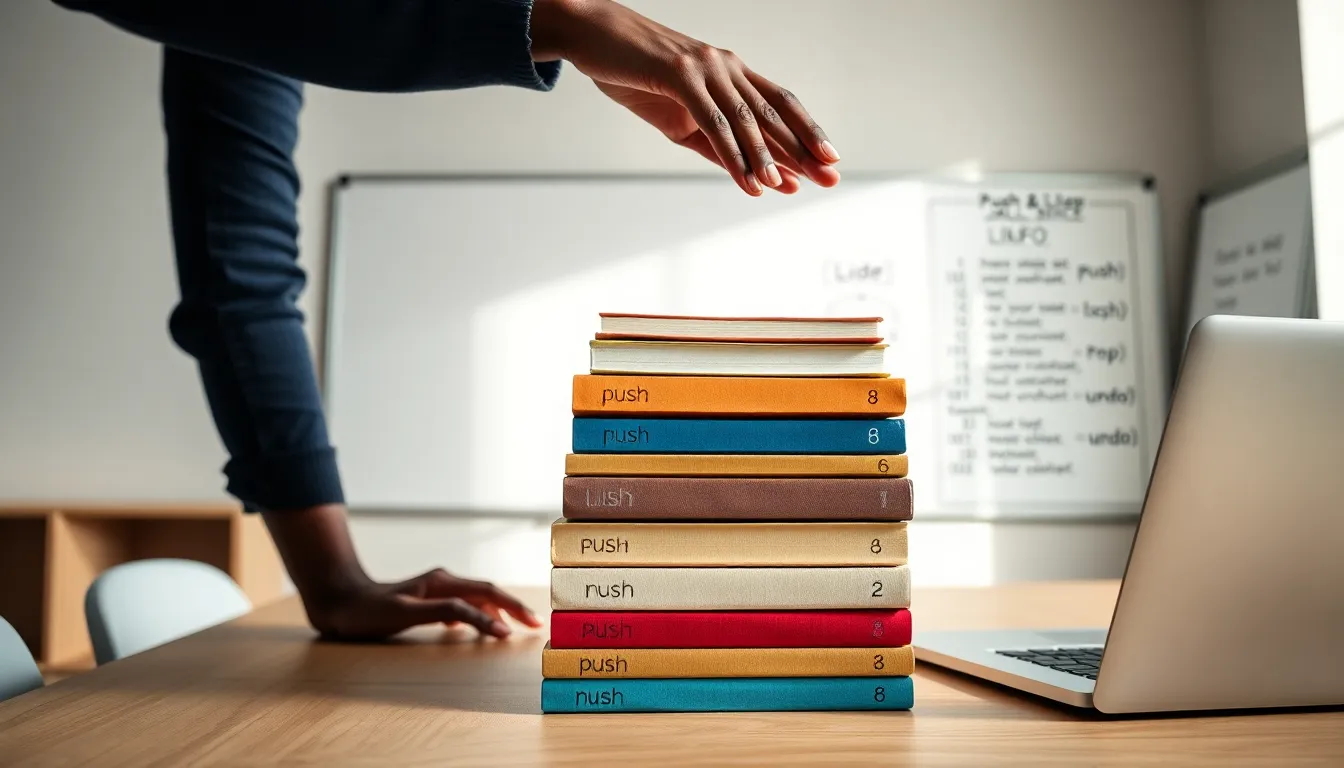

A stack is simple, immediate, and surprisingly powerful. It acts like a physical stack of plates where the last plate placed on top is the first one removed, and that Last In First Out behavior solves a wide range of problems in programming and systems design. This article explains what a stack is, why it matters, and how to carry out and use it effectively. Readers will leave with clear rules of thumb, practical examples, and a few gotchas to avoid, delivered with a light touch of humor but a professional focus on what actually matters.

What Is A Stack And Why It Matters

A stack is a linear data structure that follows Last In First Out semantics. It stores elements so that the most recently added element is the first one removed. Engineers reach for a stack when they need temporary storage with strict order. It matters because it maps directly to many real world and computational tasks such as function call tracking and expression evaluation. Designers often prefer a stack when they want predictable push and pop behavior without the overhead of full random access. The concept is simple yet it underpins essential systems from compilers to undo features in editors. When someone learns stack behavior they gain a tool that clarifies both algorithms and architecture.

Core Operations And Behavior

A stack supports a small, focused set of operations. Typical operations are push to add an element and pop to remove the most recent element. A peek or top operation reads the most recent element without removing it. An isEmpty check reports whether the stack currently holds any items. A size operation returns how many items are stored. These operations run in constant time for common implementations, enabling efficient, predictable performance. Error conditions include popping from an empty stack or pushing to a bounded stack that is full. Implementations commonly throw or return an error to indicate underflow or overflow. Correct use of these primitives preserves the LIFO invariant and keeps higher level logic straightforward.

Implementing A Stack

A stack can be implemented in multiple ways depending on constraints such as memory, performance, and simplicity. Two common approaches are array based and linked list based implementations. Each approach has tradeoffs and fits different scenarios. The choice often comes down to whether constant time indexing or flexible sizing is more important. Practical implementations pay attention to edge cases such as empty checks and resizing policies.

Array-Based Implementation

An array based stack stores elements in a contiguous block of memory. It tracks an index or pointer representing the top position. Pushing increments the pointer and writes the value. Popping decrements the pointer and returns the value. This approach delivers excellent cache locality and constant time operations while capacity is sufficient. Capacity limitations require either rejecting pushes when full or resizing the underlying array. Resizing usually doubles capacity to amortize the cost of copies. Careful code prevents off by one mistakes when incrementing and decrementing the top pointer.

Linked-List Implementation

A linked list stack stores each element in a node that points to the next node below it. Pushing creates a new node that becomes the new top. Popping removes the top node and returns its value while updating the top pointer. This approach supports truly dynamic size without resizing cost and avoids the need for contiguous memory. Memory overhead per element is higher due to node pointers. Developers must manage allocation and deallocation carefully to avoid leaks. Concurrent scenarios sometimes favor this approach when lock free algorithms rely on pointer swaps.

Language-Specific Examples (Pseudo, Python, Java)

In pseudocode a minimal stack looks like: push(x) top index increment and set element: pop() return element at top index then decrement. In Python a list can act as a stack with append and pop methods providing push and pop respectively. In Java an ArrayDeque or custom class with an array and index provides efficient stack behavior. In each language it is important to honor invariants such as updating size consistently and handling empty stack errors in a clear way. Libraries often provide robust, well tested implementations so prefer standard containers for production when available.

Common Applications And Use Cases

Stacks appear in many domains because LIFO ordering maps naturally to layered or nested problems. They are small but pervasive building blocks in software. Below are core applications that illustrate why a stack is a go to structure.

Expression Evaluation And Parsing

Compilers and interpreters use stacks to evaluate arithmetic and logical expressions. They convert infix notation to postfix using stacks for operators and operands. Parsing algorithms for parenthesis matching and syntax checking push opening tokens and pop when a matching closing token is found. Expression evaluation with a stack is both space efficient and straightforward to carry out. When expressions become more complex stacks keep local context isolated so the rest of the program stays simple.

Function Call Management And Recursion

Runtime environments carry out call stacks to track function invocation state. Each call pushes a frame containing return addresses local variables and arguments. When the function returns the frame is popped and execution resumes at the saved address. Recursion relies on the call stack to maintain distinct variable instances for each invocation. Stack overflow occurs when recursion or nested calls exceed available stack memory. Systems designers must balance stack size and recursion depth expectations to avoid runtime failures.

Backtracking, Undo Systems, And Navigation

Backtracking algorithms such as depth first search use an explicit stack to store alternative paths. Undo and redo features in applications push user actions onto a stack then pop them to revert changes. Web browsers carry out a navigation history using a pair of stacks to move back and forward between pages. These examples show how stacks maintain a recent history in a way that makes reversal operations natural and cheap.

Advanced Variations And Concepts

Stack design evolves to meet constraints such as limited memory concurrency and persistence. Variations include bounded stacks with fixed capacity resizable stacks that grow as needed and persistent stacks that preserve previous versions. Concurrent systems introduce thread safe and lock free stacks to support multi thread access without global locking. Understanding the memory model and tradeoffs helps engineers pick the right variant.

Bounded, Resizable, And Persistent Stacks

A bounded stack enforces a hard capacity which is useful in embedded systems with fixed memory. A resizable stack grows and shrinks to fit demand which simplifies usage at the cost of occasional reallocation. Persistent stacks keep old versions intact after operations, enabling time travel like undo or functional programming patterns. Implementing persistence often uses structural sharing to minimize copying and manage memory efficiently.

Thread-Safe And Lock-Free Stacks

Thread safe stacks protect operations with locks to prevent concurrent corruption. Lock free stacks rely on atomic primitives such as compare and swap to achieve concurrency without blocking. Lock free algorithms reduce contention and avoid priority inversion but they are more difficult to carry out correctly. Testing under stress and reasoning about memory ordering is essential when deploying lock free stacks in production.

Stack Versus Heap And Memory Considerations

The stack and heap refer to different memory regions and serve different purposes. The call stack stores function frames and local variables with automatic lifetime. The heap holds dynamically allocated objects whose lifetime is controlled explicitly. Stack memory allocation is fast and predictable while heap allocation is more flexible but expensive. Choosing where to place data affects performance and safety. Large objects and variable sized buffers commonly belong on the heap to avoid exhausting limited stack space.

Performance, Complexity, And Tradeoffs

Using a stack typically yields O(1) time for push pop and peek operations in typical implementations. Space cost is linear in the number of stored elements. Resizing arrays introduce occasional O(n) operations but amortized cost remains constant. Linked list implementations add pointer overhead and reduced cache locality which can impact real world speed. Tradeoffs often involve memory usage versus allocation overhead and concurrency requirements. Engineers choose the flavor of stack that aligns with system performance goals and operational constraints.

Time And Space Complexity Of Operations

Push pop and peek are constant time operations in both array and linked list stacks. Space complexity is O(n) for storing n elements regardless of implementation. Resizing strategies affect amortized analysis but not the per operation complexity in steady state. Worst case memory usage for a bounded stack is its capacity while for a dynamic stack it is proportional to the maximum size reached during execution.

When To Use A Stack Versus Other Structures

A stack is the right choice when LIFO semantics match the problem such as nested scopes or recent history. A queue fits better when first in first out ordering is required. When random access or priority ordering is necessary an array or priority queue is a better fit. For persistent history with branching a tree or graph might be preferable. Choosing the simplest tool that preserves invariants usually leads to clearer and faster code.

Best Practices And Common Pitfalls

Designing stack APIs and implementations treats edge cases with care. Clear contracts for error conditions and capacity changes help clients use the stack safely. Avoiding subtle bugs requires attention to off by one errors and consistent state updates. Memory leaks and unfreed nodes in manual memory environments cause long lived problems. Testing common sequences and extreme conditions reveals issues early.

Design And API Recommendations

Expose a minimal API: push pop peek isEmpty and size. Document behavior on underflow and overflow explicitly. Prefer returning optional values or throwing predictable exceptions rather than returning sentinel values. Consider providing both bounded and unbounded variants when the environment has strict memory limits. Use existing standard library containers where possible to reduce maintenance burden.

Avoiding Overflow, Memory Leaks, And Off-By-One Errors

Always check capacity before pushing on bounded stacks. Free or release nodes promptly in manual memory environments. Write unit tests that exercise empty stacks one element stacks and near capacity conditions. Review index arithmetic to prevent off by one mistakes especially when top points to the next free slot or the current element. Static analysis and sanitizers detect many common errors during development.

Conclusion

A stack is a compact concept with broad and lasting impact on software design. It gives predictable performance simple mental models and direct implementations for nested and recent history problems. They are easy to carry out yet subtle bugs crop up without disciplined testing and clear APIs. When engineers pick the right stack variation and follow best practices they get reliable, efficient behavior in compilers runtimes and applications. The stack remains one of the most practical and instructive data structures to master.